Beyond Vibe Coding: Building Production Software with AI and Engineering Methodology


The AI coding revolution has a credibility problem. "Vibe coding" — the practice of generating code through AI prompts with minimal engineering oversight — has become the default perception of what AI-assisted development looks like. The results are predictable: fragile prototypes, absent test suites, and architectural decisions made by autocomplete.

But there is a fundamentally different approach: one that uses AI within a structured engineering methodology, with planning before building, testing alongside implementing, and reviewing before shipping.

This article documents how two real-world brownfield projects were rebuilt from the ground up using the BMad Method and Claude Code CLI — not as vibe coding experiments, but as engineering projects with professional standards. The combined result: 62 stories, 11 epics, 1,779 tests, and zero production incidents.

The Problem with Vibe Coding

Vibe coding is seductive. You describe what you want, AI generates code, and something appears on screen within minutes. For prototypes and throwaway experiments, this works fine. For production software, it is a recipe for disaster:

No architecture: Code grows organically without structure, making changes increasingly expensive

No tests: The first refactor breaks everything, and nobody knows what broke or why

No quality gates: Security vulnerabilities, accessibility failures, and performance issues ship silently

No learning loop: The same mistakes repeat because there is no process to capture and prevent them

The fundamental problem is not AI — it is the absence of engineering discipline around it. AI amplifies whatever process you feed it: feed it chaos, you get faster chaos.

A Different Approach: BMad Method + Claude Code

The BMad Method is an AI-assisted development framework that treats AI as a team of specialized engineering agents, not a general-purpose code generator. Think of it as a virtual engineering team:

A Product Manager writes the PRD with measurable requirements

An Architect designs the technical solution with documented trade-offs

A UX Designer defines interface specifications and accessibility criteria

A Scrum Master breaks work into epics and traceable stories

A Developer implements each story following strict acceptance criteria

A QA Engineer designs the test strategy

A Code Reviewer performs adversarial multi-layer reviews

Each agent has deep domain expertise. Each artifact feeds into the next. Quality gates prevent moving forward with incomplete work. Retrospectives at every epic close the learning loop.

Combined with Claude Code CLI (powered by Claude Opus), this creates an engineering pipeline where AI operates under the same discipline you would expect from a professional team. Every line of code traces to a requirement. Every requirement traces to a business need. Nothing ships without tests, review, and validation.
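As a rough illustration of what a quality gate with traceability might look like in code, the sketch below models a story that may ship only when it traces to a known requirement, has tests, and has an approved review. The `Story` fields, the requirement IDs, and the `gate_failures` function are hypothetical names invented for this example. They are not part of the BMad Method or Claude Code.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    """Hypothetical story record; field names are illustrative only."""
    story_id: str
    requirement_ids: list[str] = field(default_factory=list)  # e.g. PRD item IDs
    test_count: int = 0
    review_approved: bool = False


def gate_failures(story: Story, known_requirements: set[str]) -> list[str]:
    """Return the reasons a story may not ship; an empty list means the gate passes."""
    failures = []
    if not story.requirement_ids:
        failures.append("no requirement traced")
    unknown = [r for r in story.requirement_ids if r not in known_requirements]
    if unknown:
        failures.append(f"unknown requirements: {', '.join(unknown)}")
    if story.test_count == 0:
        failures.append("no tests")
    if not story.review_approved:
        failures.append("review not approved")
    return failures


# Made-up requirement IDs and stories, for illustration only.
requirements = {"FR-1", "FR-2", "NFR-1"}
ready = Story("1.1", ["FR-1"], test_count=12, review_approved=True)
blocked = Story("1.2", ["FR-9"], test_count=0, review_approved=False)

print(gate_failures(ready, requirements))
print(gate_failures(blocked, requirements))
```

Returning a list of reasons rather than a single boolean keeps the gate explainable: a blocked story says exactly what is missing.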

The critical insight: the methodology is stack-agnostic. The same workflow applies whether you are building a web application or a mobile app. To demonstrate this, I applied it to two completely different projects.

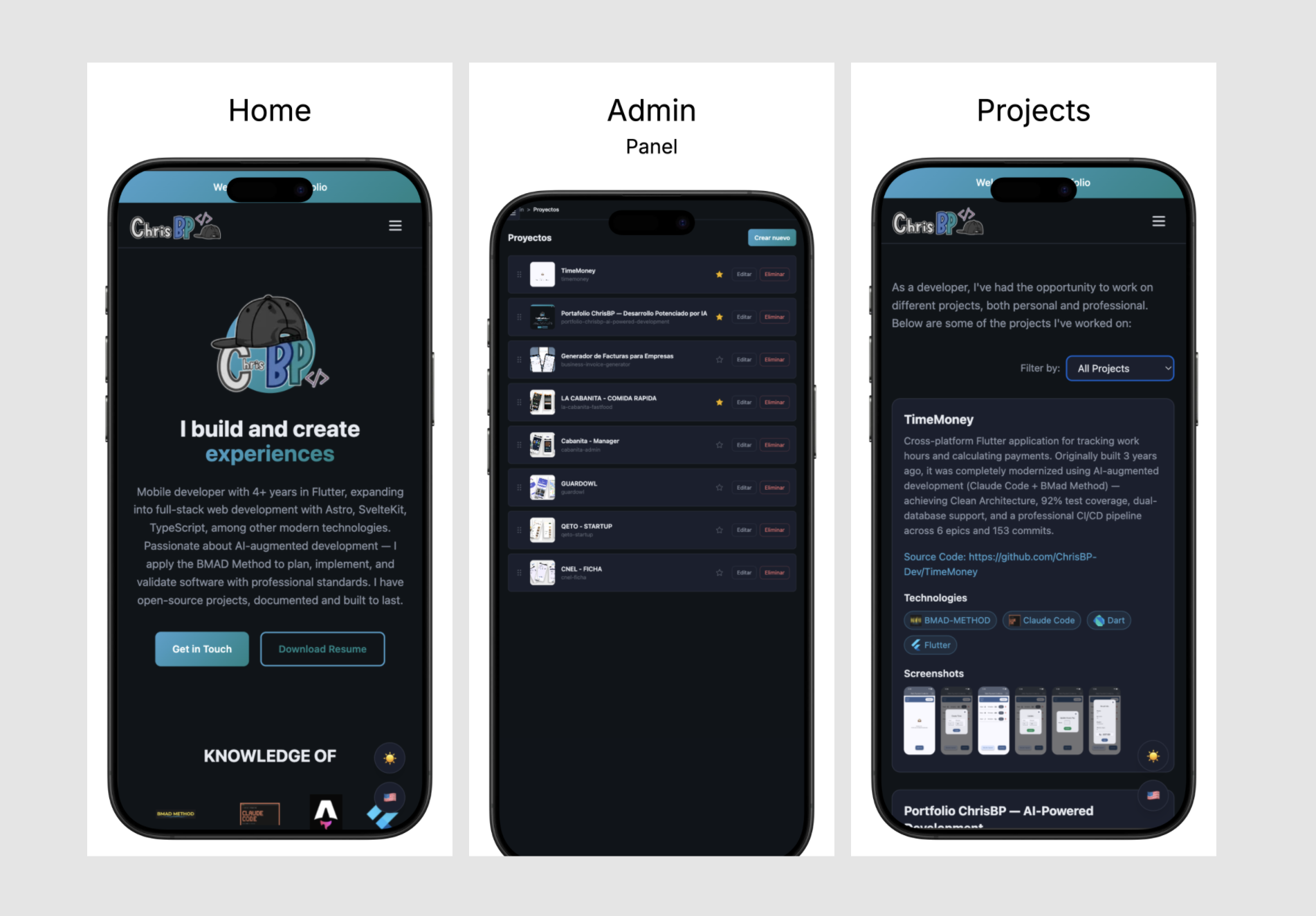

Case Study: Portfolio — Flutter Web → Modern Stack

My previous portfolio was built with Flutter Web almost two years ago. Flutter Web renders to an HTML canvas, so search engines find little indexable content and screen readers cannot navigate it. The technology had also stagnated. For a developer portfolio, these were disqualifying problems.

The BMad agents produced a complete engineering specification before any code was written: a Product Brief defining vision and personas, a PRD with 49 functional and 29 non-functional requirements, an Architecture Decision Document selecting Astro 6 SSG + Svelte 5 + Firebase + TypeScript strictest + Tailwind CSS 4, and a UX Specification with 46 design requirements and WCAG 2.1 AA compliance criteria.

Implementation followed in 5 epics across 20+ hours:

Epic 1 — Foundation (10 stories): Testing infrastructure, CI/CD pipeline, Zod schemas, design system, i18n, theming. 146 tests, 54 code review patches caught.

Epic 2 — Public Site (8 stories): All public pages, data migration from Flutter schema, View Transitions API, responsive design. 388 tests total.

Epic 3 — Admin Panel (8 stories): Firebase Auth, full CRUD, ImageService with retry logic and orphan cleanup. 879 tests.

Epic 4 — Blog System (5 stories): TipTap rich text editor, bilingual content, OpenGraph cards, drag-drop ordering. ~1,279 tests. First story to pass code review with zero patches.

Epic 5 — SEO & Accessibility (6 stories): Structured data, sitemap, WCAG 2.1 AA audit with axe-core, performance optimization. 1,406 total tests, zero production incidents.

The architecture delivered measurable results: public pages ship zero JavaScript by default, and the home page totals just 25.8 KB of JS, roughly half the 50 KB budget. TypeScript strictest mode catches type errors before runtime. Zod schemas validate every Firestore document at boundaries. Lighthouse CI enforces 95+ scores on every deployment.
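The idea of validating documents at boundaries can be sketched in a few lines. The portfolio does this with Zod schemas in TypeScript. The example below is only a Python analogue of the same idea, and the `Project` shape and `parse_project` function are assumptions made for illustration, not the real Firestore schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    """Hypothetical document shape; the real schema is not shown in this article."""
    title: str
    year: int
    tags: tuple[str, ...]


def parse_project(raw: dict) -> Project:
    """Validate an untrusted document once, at the boundary, and return a typed value."""
    title = raw.get("title")
    if not isinstance(title, str) or not title.strip():
        raise ValueError("title must be a non-empty string")
    year = raw.get("year")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(year, int) or isinstance(year, bool):
        raise ValueError("year must be an integer")
    tags = raw.get("tags", [])
    if not isinstance(tags, list) or not all(isinstance(t, str) for t in tags):
        raise ValueError("tags must be a list of strings")
    return Project(title=title.strip(), year=year, tags=tuple(tags))


# Made-up sample documents, for illustration only.
good = parse_project({"title": " Portfolio ", "year": 2024, "tags": ["astro"]})
print(good)

try:
    parse_project({"title": "Broken", "year": "2024"})
except ValueError as err:
    print(f"rejected: {err}")
```

Once a document has passed through the parser, the rest of the code can rely on its shape without re-checking it.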

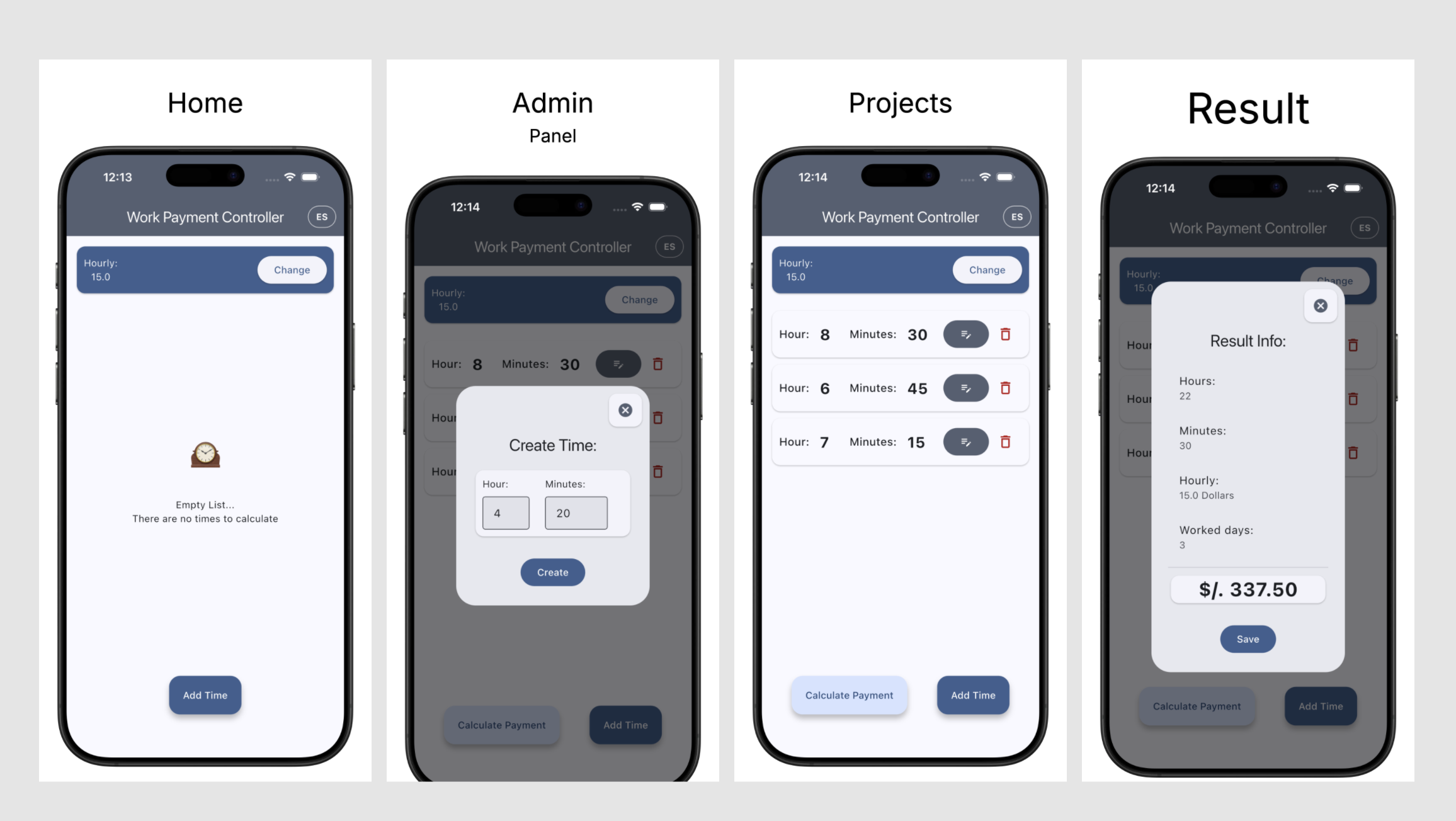

Case Study: TimeMoney — Legacy Dart → Modern Flutter

TimeMoney is a cross-platform Flutter app for hourly workers to track hours and calculate payments, running on iOS, Android, Web, and Windows. It was built three years ago with Dart 2.x, using outdated patterns and no formal architecture.

The same BMad Method workflow produced: a PRD with 24 functional and 11 non-functional requirements, an architecture document specifying feature-first Clean Architecture with a dual datasource strategy (ObjectBox for native platforms, Drift with WASM + OPFS for web), and a complete epic breakdown mapping all 53 detailed requirements to 25 stories across 6 epics.

Implementation followed across 6 epics:

Epic 1 — SDK Foundation (4 stories): Dart 2.19 → 3.11+, Flutter 3.7.5 → 3.41+, dependency resolution.

Epic 2 — Architecture (5 stories): Feature-first restructure with domain/data/presentation layers, shared components.

Epic 3 — State Management (6 stories): Dart 3 sealed classes for BLoC states, ActionState generic pattern, test infrastructure.

Epic 4 — Multi-Platform (5 stories): Drift database for web, platform-aware dependency injection via conditional imports, bilingual i18n.

Epic 5 — Testing (3 stories): 200 new tests (121% growth), widget tests, golden visual regression tests. 92.3% coverage achieved.

Epic 6 — Polish (2 stories): 8-job CI/CD pipeline, Maestro E2E with auto-generated screenshots and demo video.

The result: 373 tests at 92.3% coverage, zero deferred technical debt, 100% of use cases and BLoCs at full coverage, running on four platforms from a single codebase. The Either monad (fpdart) ensures railway-oriented error handling throughout. Sealed classes replace legacy patterns with compile-time exhaustive matching.
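For readers unfamiliar with railway-oriented error handling, here is a minimal sketch of the pattern. TimeMoney uses fpdart's Either in Dart. The Python below is an analogue written for this article, and `Left`, `Right`, `bind`, `parse_hours`, and `to_payment` are illustrative names rather than the fpdart API or the app's code.

```python
from dataclasses import dataclass
from typing import Callable, Generic, TypeVar, Union

E = TypeVar("E")
A = TypeVar("A")
B = TypeVar("B")


@dataclass(frozen=True)
class Left(Generic[E]):
    """Failure track: carries an error value."""
    error: E


@dataclass(frozen=True)
class Right(Generic[A]):
    """Success track: carries a result value."""
    value: A


Either = Union[Left[E], Right[A]]


def bind(result: Either[E, A], step: Callable[[A], Either[E, B]]) -> Either[E, B]:
    """Run the next step only on success; a failure passes through untouched."""
    if isinstance(result, Left):
        return result
    return step(result.value)


def parse_hours(text: str) -> Either[str, float]:
    """Turn user input into hours, or an error message."""
    try:
        hours = float(text)
    except ValueError:
        return Left(f"not a number: {text!r}")
    return Right(hours) if hours >= 0 else Left("hours cannot be negative")


def to_payment(rate: float) -> Callable[[float], Either[str, float]]:
    """Build a step that converts hours into a payment at the given hourly rate."""
    return lambda hours: Right(round(hours * rate, 2))


print(bind(parse_hours("7.5"), to_payment(20.0)))
print(bind(parse_hours("abc"), to_payment(20.0)))
```

Errors are ordinary return values here, so every caller has to decide what to do with the failure track. Dart's sealed classes go further by having the compiler check that every case is handled, which this Python sketch cannot show.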

The Quality Compounding Effect

The most powerful pattern across both projects was the compounding quality effect — each epic improved on the previous one because retrospectives closed the learning loop.

In the portfolio project, code review patches dropped from 54 (Epic 1) to 26 (Epic 5) — a 52% reduction. Deferred items fell from 12 to 1. In TimeMoney, code review patches within Epic 5 alone dropped from 14 to 2 across three stories.

This was not because the AI model improved. It was because the process improved. Each retrospective captured what failed and what to change:

Specs based on outdated framework versions → research validation became mandatory before story creation

Zero admin E2E tests despite having test designs → the Scrum Master now verifies test tasks exist in every story

Tautological tests (verifying mocks instead of behavior) caught in review → substantive testing became an explicit review criterion
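The difference between a tautological test and a substantive one is easier to see side by side. This is a minimal sketch using Python's standard `unittest.mock`. The `total_payment` function and the repository's `fetch_hours` method are hypothetical and do not come from either project.

```python
from unittest.mock import Mock


def total_payment(repository, hourly_rate: float) -> float:
    """Sum the hours returned by the repository and multiply by the rate."""
    return sum(repository.fetch_hours()) * hourly_rate


def test_tautological():
    # Only proves the mock returns what it was told to return.
    # This would still pass if total_payment were completely broken.
    repository = Mock()
    repository.fetch_hours.return_value = [8, 6]
    assert repository.fetch_hours() == [8, 6]


def test_substantive():
    # Exercises the real function; the mock only supplies input data.
    repository = Mock()
    repository.fetch_hours.return_value = [8, 6]
    assert total_payment(repository, hourly_rate=10.0) == 140.0
    repository.fetch_hours.assert_called_once()


test_tautological()
test_substantive()
```

Both tests pass and both count toward the test total, which is why a reviewer has to read what a test asserts rather than trust the number.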

The lesson: AI does not self-improve within a project. Process does. The methodology creates a feedback loop that makes every subsequent epic more efficient and more correct than the last.

Combined across both projects: 62 stories completed, 1,779 tests written, zero production incidents, 10 epic retrospectives, and a measurable improvement trend in quality metrics from first epic to last.
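The headline figures can be re-derived from the per-epic numbers quoted in the two case studies. The short script below does only that arithmetic, and the variable names are my own.

```python
# Per-epic story counts as listed in the two case studies.
portfolio_stories = [10, 8, 8, 5, 6]
timemoney_stories = [4, 5, 6, 5, 3, 2]

total_stories = sum(portfolio_stories) + sum(timemoney_stories)
total_epics = len(portfolio_stories) + len(timemoney_stories)
total_tests = 1406 + 373  # final test counts: portfolio + TimeMoney


def percent_reduction(before: int, after: int) -> float:
    """Relative drop from before to after, as a percentage."""
    return (before - after) / before * 100


# Portfolio code review patches, Epic 1 vs Epic 5.
patch_drop = percent_reduction(54, 26)

print(total_stories, total_epics, total_tests)  # 62 11 1779
print(f"{patch_drop:.1f}%")  # 51.9%, which rounds to the 52% quoted above
```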

Why This Is Not Vibe Coding

Let me be direct about the contrast:

Vibe coding generates code from prompts and ships whatever compiles. There is no PRD, no architecture document, no test strategy. When bugs appear, the answer is "ask the AI to fix it" — creating a cycle of patches on patches with no traceability and no learning.

Engineering-augmented development uses AI within a methodology. Every line of code traces to a requirement. Every requirement traces to a business need. Tests are written alongside implementation, not after. Code review catches what tests miss. Retrospectives prevent the same mistakes from recurring.

The difference is not the AI model. It is the process around it.

This portfolio has 1,406 tests not because AI generated them automatically, but because the methodology required them at every step. It passes WCAG 2.1 AA not because AI knows accessibility, but because the UX specification defined it as a non-negotiable requirement, the architecture planned for it, and code review enforced it.

The Direction the Industry Is Moving

Companies do not need developers who write better prompts. They need developers who can direct AI to produce production-quality software — with architecture, tests, accessibility, and maintainability. The demand is not for more code; it is for more reliable code.

The developers who thrive in this era will be the ones who bring engineering discipline to AI: those who understand that a PRD prevents scope creep, that code review catches what tests miss, that retrospectives prevent repeated mistakes, and that quality gates are not overhead but the thing that makes AI-generated code production-worthy.

These are small projects: a personal portfolio and a utility app. But the methodology is designed to scale. The same BMad Method workflow that produced 62 stories and 1,779 tests across these projects can be applied to enterprise applications with hundreds of stories. The quality gates, the retrospectives, and the adversarial reviews are engineering fundamentals that become more valuable, not less, when AI accelerates the pace.

Explore It Yourself

Both projects are fully open source. Every planning artifact, story file, and epic retrospective is available in the repositories:

Portfolio (Web): github.com/ChrisBP-Dev/portfolio

TimeMoney (Flutter): github.com/ChrisBP-Dev/TimeMoney

Methodology: BMad Method

AI Tool: Claude Code CLI by Anthropic (Claude Opus)

The commit histories tell the complete stories — from product briefs to production deployments. Study them, fork them, and apply the methodology to your own brownfield projects.

The future of software development is not AI replacing engineers. It is engineers wielding AI with the discipline the craft demands.